Airflow Data Pipeline

A modern ELT pipeline using Dockerized PostgreSQL, dbt for transformations, and Airflow for orchestration. This project demonstrates how to build a scalable data pipeline that extracts raw data, transforms it into analytics-ready tables, and orchestrates the workflow with Airflow.

Project Overview

The pipeline extracts raw data from a source Postgres database with a Python script, loads it into a destination Postgres database, and transforms it with dbt into analytics-ready tables. Airflow orchestrates the workflow, and Docker Compose runs every service in its own container.

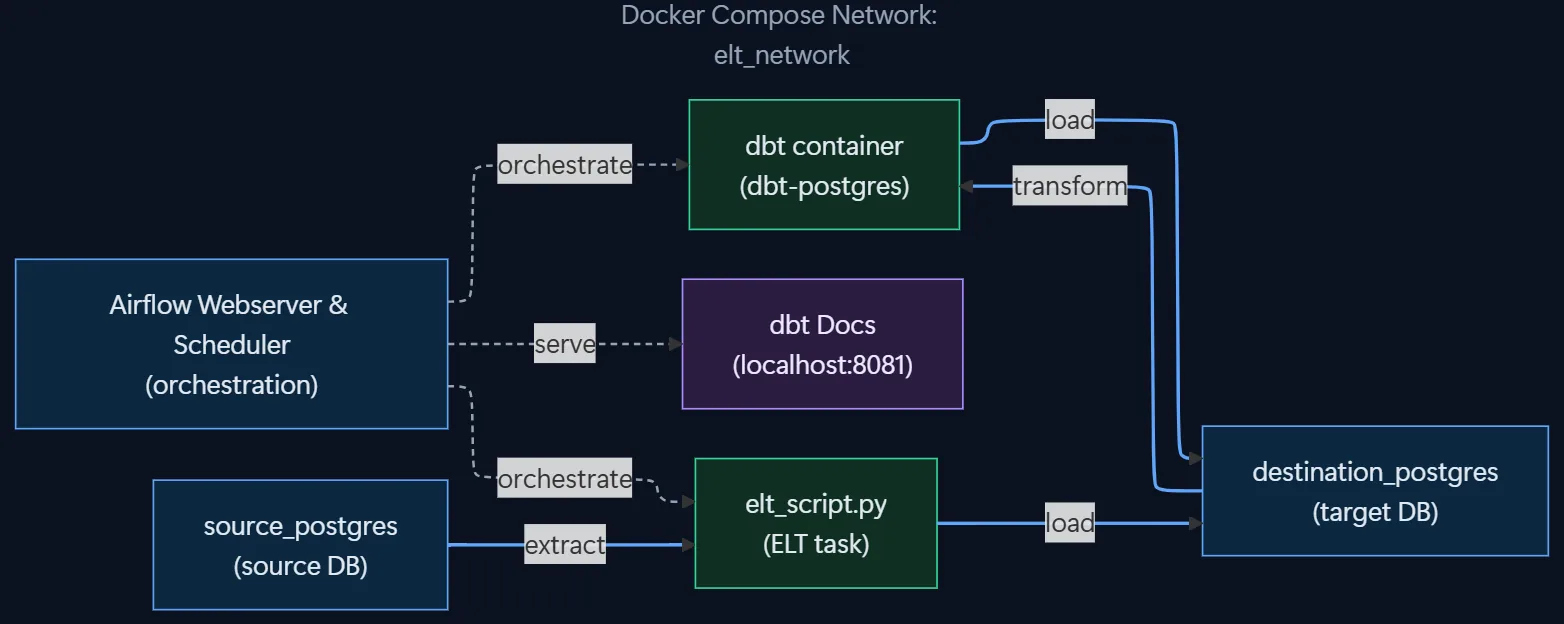

Architecture

The pipeline follows a modular, containerized architecture where each service plays a distinct role in the ELT process.

Components:

- Source Postgres → Simulates raw data (mock movies dataset).

- Python ELT Script → Extracts data from the source and loads it into a destination database.

- Destination Postgres → Stores processed and analytics-ready data.

- dbt → Transforms loaded data into models and builds lineage.

- Airflow → Orchestrates, schedules, and monitors the workflow.

- Docker Compose → Manages all services and networking.

Each component runs as an isolated Docker container, ensuring reproducibility across environments.

How It Works

Airflow (Orchestration)

Airflow manages the sequence of tasks across the pipeline from extraction to transformation.
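As a rough illustration of that sequencing, the sketch below defines a two-task DAG in which the ELT script runs first and dbt runs second. It is not the project's actual DAG: the `dag_id`, the task IDs, the start date, the use of `BashOperator`, and the `dbt run` command are assumptions, and the import paths assume Airflow 2.x (2.4 or later for the `schedule` argument). Only the script path `/app/elt_script.py` comes from this README.

```python
from datetime import datetime

# Commands for each stage. The script path is taken from the manual-run
# command in this README; the dbt command is an assumption.
ELT_COMMAND = "python /app/elt_script.py"
DBT_COMMAND = "dbt run"


def build_dag():
    """Build a hypothetical extract/load -> transform DAG."""
    # Imported lazily so this module can be read without Airflow installed.
    from airflow import DAG
    from airflow.operators.bash import BashOperator

    with DAG(
        dag_id="elt_and_dbt",             # hypothetical name
        start_date=datetime(2024, 1, 1),  # hypothetical start date
        schedule=None,                    # trigger manually from the UI
        catchup=False,
    ) as dag:
        run_elt = BashOperator(task_id="run_elt_script", bash_command=ELT_COMMAND)
        run_dbt = BashOperator(task_id="run_dbt", bash_command=DBT_COMMAND)

        # dbt starts only after the extract/load step succeeds.
        run_elt >> run_dbt

    return dag
```

Airflow discovers DAG objects at module level, so a real DAG file would end with something like `dag = build_dag()`. If the ELT script and dbt live in separate containers, as they do here, an operator that can reach those containers would be needed instead of a plain `BashOperator`.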

Below is the DAG in Airflow, showing successful completion of each stage:

dbt (Transformation)

dbt handles the transformation layer, where SQL models are built on top of one another to create analytics-ready tables.

The dbt lineage graph below shows model dependencies from raw data to final analytical tables:

Docker (Containerization)

All components (databases, scripts, dbt, and Airflow) run together using Docker Compose.

The snippet below shows a successful startup of all containers:

Data Flow

- Data Generation → init.sql seeds the source Postgres with mock movie data.

- Extract → Python script uses pg_dump to extract data from the source Postgres database.

- Load → The same script loads data into the destination Postgres database.

- Transform → dbt executes SQL models to transform raw data into analytics-ready tables.

- Orchestrate → Airflow schedules, monitors, and validates this workflow using a DAG.
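The extract and load steps above can be sketched roughly as follows. This is a minimal illustration, not the contents of the project's `elt_script.py`: the function names, the config dictionary keys, the dump file name, and the use of `psql` for loading are all assumptions. Only the use of `pg_dump` for extraction comes from this README.

```python
import os
import subprocess


def build_dump_command(cfg, dump_file):
    """Return a pg_dump command that writes the source database to dump_file."""
    return [
        "pg_dump",
        "-h", cfg["host"],
        "-p", str(cfg["port"]),
        "-U", cfg["user"],
        "-d", cfg["dbname"],
        "-f", dump_file,
        "-w",  # never prompt for a password; it is passed via PGPASSWORD
    ]


def build_load_command(cfg, dump_file):
    """Return a psql command that replays dump_file into the destination."""
    return [
        "psql",
        "-h", cfg["host"],
        "-p", str(cfg["port"]),
        "-U", cfg["user"],
        "-d", cfg["dbname"],
        "-f", dump_file,
    ]


def run_elt(source, destination, dump_file="data_dump.sql"):
    """Dump the source database, then load the dump into the destination."""
    # Extract: the password is supplied through the environment, not argv.
    subprocess.run(
        build_dump_command(source, dump_file),
        env={**os.environ, "PGPASSWORD": source["password"]},
        check=True,  # raise CalledProcessError if pg_dump fails
    )
    # Load: replay the SQL dump against the destination database.
    subprocess.run(
        build_load_command(destination, dump_file),
        env={**os.environ, "PGPASSWORD": destination["password"]},
        check=True,
    )
```

Building the argument lists in separate functions keeps the commands easy to inspect and test without a running database. `run_elt` would be called with two dictionaries holding `host`, `port`, `user`, `password`, and `dbname` for each database.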

Setup Instructions

Clone and configure

git clone https://github.com/dorukalkan/postgres-dbt-airflow-pipeline.git

cd postgres-dbt-airflow-pipeline

cp .env.example .env

Docker compose and run

docker compose up -d

Access components

- Airflow UI → http://localhost:8080

- Source Postgres → localhost:5433

- Destination Postgres → localhost:5434

- dbt → via container

docker compose run dbt run

- dbt Docs → http://localhost:8081
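To confirm that the services listed above are reachable after startup, a small check like the following could help. It is a sketch that only tests whether each port accepts TCP connections; the helper names are hypothetical, and the hosts and ports are the ones listed in this README.

```python
import socket

# Host and port for each service, as listed in this README.
SERVICES = {
    "Airflow UI": ("localhost", 8080),
    "Source Postgres": ("localhost", 5433),
    "Destination Postgres": ("localhost", 5434),
    "dbt Docs": ("localhost", 8081),
}


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolved hosts.
        return False


def check_services(services=SERVICES):
    """Map each service name to whether its port accepts connections."""
    return {name: is_port_open(host, port) for name, (host, port) in services.items()}
```

Calling `check_services()` returns a dictionary of service names to booleans. An open port shows that the container is listening, not that the service inside it is fully healthy.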

Run the ELT pipeline manually

docker exec -it elt-elt_script-1 python /app/elt_script.py